AI Policy in the U.S.: Where Are We Now?

The White House recently released its National Policy Framework for Artificial Intelligence, which contributes to the ongoing debate over how the United States should address AI policy. This legislative blueprint, issued under President Trump, urges Congress to adopt a federally unified, “light-touch” approach, one that prioritizes innovation, preempts state AI laws, and relies on existing legal regimes rather than creating new regulations.

Let’s break down what the framework includes.

The White House AI Policy Blueprint: Key Recommendations

The White House’s framework outlines seven core legislative recommendations for Congress:

Preemption of State AI Laws: The administration argues that AI is an “inherently interstate phenomenon” with national security and foreign policy implications, and thus should be governed by a single federal standard. The framework explicitly calls for Congress to preempt state laws that conflict with federal policy, citing concerns that a patchwork of state regulations would stifle innovation and create compliance burdens for businesses operating across state lines. It also states that developers should not be held liable when third parties use their models illegally. At the same time, the administration believes the national AI standard should preserve state authority over law enforcement, consumer protection, zoning, and states’ own use of AI, respecting federalism and leaving state powers in these areas intact.

Protecting Children and Empowering Parents: The framework recommends giving parents tools to manage their children’s digital environment, such as account controls to protect privacy and manage device use. It also calls for federal legislation to prevent AI-generated child sexual abuse material, but stops short of mandating broad content moderation or platform liability. Lastly, it encourages Congress to ensure that federal legislation does not preempt states from enforcing their own generally applicable laws protecting children.

Copyright and Creator Protections: The administration acknowledges the need to protect creators’ voices and likenesses but maintains that AI scraping of copyrighted material for training purposes does not violate copyright law. It concedes that this position is contested and calls for letting the courts resolve the matter. The administration also calls on Congress to create federal protections against unauthorized commercial use of AI-generated digital replicas of a person’s voice, likeness, or identity, and says those protections must include clear First Amendment exceptions for parody, satire, and news so that the rules are not misused to censor free speech online.

Energy and Infrastructure: To prevent electricity costs from surging due to AI data centers, and to speed up innovation, the framework calls for streamlined permitting and codification of a voluntary “Ratepayer Protection Pledge” signed by major tech companies, ensuring they cover the full costs of their energy use.

Workforce Development and AI Fluency: The framework urges Congress to expand AI training in education and workforce programs—such as apprenticeships—using non-regulatory methods to prepare workers for an AI-driven economy. It also encourages Congress to study AI’s impact on job roles to guide policy, and to support land-grant institutions in providing technical assistance, pilot projects, and youth AI programs.

Free Speech and Anti-Censorship: The framework asks the federal government to protect free speech and First Amendment rights by ensuring AI systems are not used to suppress lawful political expression or dissent. To achieve this, the administration recommends that Congress prohibit government coercion of tech and AI providers to censor, ban, or manipulate content based on partisan or ideological motives. The administration also believes Americans should have legal avenues to challenge and seek redress for any federal agency actions that attempt to censor speech or control information on AI platforms.

National Security and Innovation: The administration seeks to remove barriers to AI innovation, accelerate deployment across industries, and ensure American dominance in the global AI race, particularly against China. It recommends Congress create AI regulatory sandboxes to foster innovation and maintain U.S. leadership in AI, while making federal datasets more accessible for AI training. The administration believes lawmakers should rely on existing sector-specific regulators and industry standards to guide AI development and deployment.

Strengthening American Communities and Small Businesses: The framework states that AI development should boost U.S. economic growth, energy leadership, and small businesses—while protecting communities from harm. It calls upon Congress to combat AI scams, support small businesses with grants and technical assistance, and prevent AI data centers from raising household electricity costs (as mentioned earlier).

Why Past National AI Policy Efforts Have Failed

The U.S. has struggled for years to craft comprehensive AI legislation. Several factors have contributed to these failures:

Lack of Congressional Consensus: Despite bipartisan interest, Congress has repeatedly failed to pass broad AI or privacy laws. Sweeping state AI preemption measures proposed for must-pass legislation, such as the budget or the National Defense Authorization Act (NDAA), have been stripped out amid bipartisan opposition.

Disagreement on AI Policy Approach: Tech companies and venture capital firms have asked for federal preemption, arguing that state laws create a burdensome patchwork. States and civil society groups, meanwhile, have argued that federal inaction has left a regulatory vacuum and that states have a right to protect their citizens.

Complexity and Rapid Change: AI is a multi-faceted, fast-evolving technology. Crafting legislation that is both effective and flexible has proven difficult. Past efforts have either been too broad (and thus unpassable) or too narrow (and thus ineffective).

Self-Regulation Has Not Worked in Some Instances: The tech industry’s voluntary commitments and self-regulation have failed to address public concerns about safety, transparency, and ethical design in some instances. The absence of federal oversight has led to a proliferation of state laws, each addressing different aspects of AI risk.

States’ Rights Hurdles: Many governors and other policymakers see states as laboratories for innovation in tech policy, and do not want to cede that ground to what they see as federal overreach. However, those in favor of a national framework argue that Article I, Section 8 of the Constitution gives Congress purview over the matter because AI affects interstate commerce.

Recent State AI Laws: What Are They Addressing?

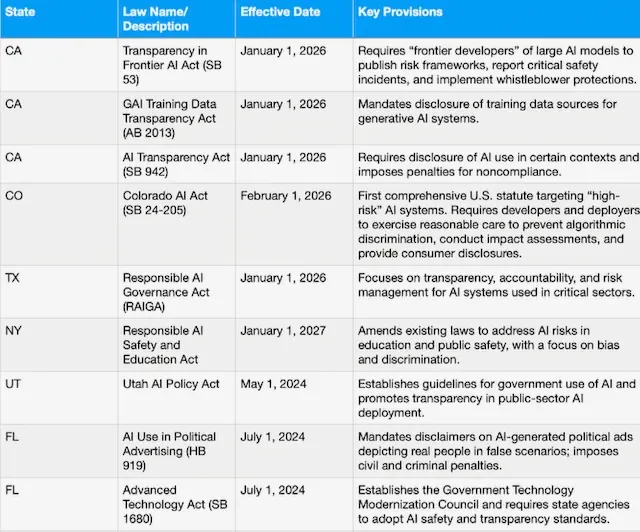

States have moved quickly to regulate AI: more than 1,000 AI-related bills were introduced in 2025 alone, and several states have already enacted significant laws that take effect in 2026.

These laws generally focus on:

Transparency: Requiring disclosure of AI use, training data, and decision-making processes.

Anti-Discrimination: Mandating impact assessments and reasonable care to prevent algorithmic bias.

Consumer Protection: Ensuring accountability for AI-driven harms and providing avenues for redress.

Public Sector Use: Setting standards for government deployment of AI, especially in high-stakes areas like hiring, policing, and education.

The White House framework specifically targets laws like Colorado’s AI Act, which it views as overly burdensome and inconsistent with its innovation-first approach.

What’s Not Included in the White House Framework?

While the White House framework addresses several issues, it doesn’t include some protections found in state-level actions and alternative policy proposals:

Comprehensive Privacy Protections: The framework does not propose new federal privacy laws or robust data protection standards that address AI’s potential impact on personal privacy.

Algorithmic Discrimination and Civil Rights: The proposed framework lacks enforceable safeguards against algorithmic discrimination in areas like housing, employment, lending, and criminal justice, sectors where AI bias has been well documented (Raghavan et al., 2020). There is also no discussion of protected categories such as race, sex, or national origin under Title VII, or age under the Age Discrimination in Employment Act (ADEA).

Workforce Protections: The focus on workforce development is limited to education and training. There are no proposals to address AI-driven job displacement or wage suppression.

Environmental Impact: Beyond energy costs, the framework does not address the broader environmental impact of AI, such as water usage by data centers or the carbon footprint of large-scale AI training.

Accountability and Liability: The framework opposes holding AI developers liable for third-party misuse of their models, a stance that advocates argue could erode consumer trust in AI technologies and governance.

What Do You Think?

What is the optimal balance between innovation and protection in the age of AI? What would you like to see Congress address as it relates to federal AI policy?

REFERENCES

Dastin, J. (2018, October 10). Amazon scraps secret AI recruiting tool that showed bias against women. Reuters.

The White House. (2026). National policy framework for artificial intelligence: Legislative recommendations.

Raghavan, M., Barocas, S., Kleinberg, J., & Levy, K. (2020). Mitigating bias in algorithmic hiring: Evaluating claims and practices. Science, 367(6484), 1246–1251.